Artificial Intelligence

Transfer learning is a popular approach in deep learning in which pre-trained models are used as the starting point for computer vision (CNN-based) and natural language processing (NLP) tasks. It is popular given the enormous resources required to train deep learning models or the large and challenging datasets on which deep […]

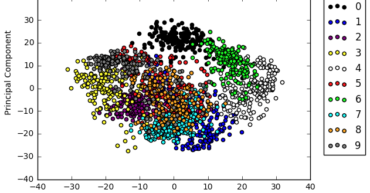

#CellStratAILab #disrupt4.0 #WeCreateAISuperstars CellStrat AI Lab is engaged in AI innovation and product development. The sophistication of our AI Lab members’ presentations continues to rise week after week. Last Saturday, the AI Lab started with a session on Image Descriptors, Feature Descriptors & Feature Vectors by Sonal Kukreja. In computer vision, image descriptors are descriptions of the visual […]

Artificial intelligence applications are spreading into almost every known field, and developing them with machine learning requires large amounts of training data: the more, the better. Large data sets are useful, but on the flip side, using a large data set has its own […]

#CellStratAILab #disrupt4.0 #WeCreateAISuperstars Last Saturday, CellStrat AI Lab Team Lead Niraj Kale presented an intuitive hands-on workshop on Face Recognition with the MTCNN and FaceNet algorithms. The session included a theory presentation along with an extensive hands-on code workshop. This model has two networks at play. First, the MTCNN localizes the face by creating a bounding […]

Artificial intelligence is growing exponentially, with an estimated market size of 7.35B US dollars. McKinsey predicts that AI techniques (including deep learning and reinforcement learning) have the potential to create between $3.5T and $5.8T in value annually. Reinforcement learning (RL) is an area of machine learning concerned with how software agents ought to take actions […]

Models #pretrained on a domain- or application-specific corpus: #BioBERT (biomedical text), #SciBERT (scientific publications), #ClinicalBERT (clinical notes). Training on a domain-specific corpus has been shown to yield better performance when these models are fine-tuned on downstream #NLP tasks such as #NER for those domains, compared with fine-tuning #BERT (which was trained on BooksCorpus and Wikipedia).

Attention, the simple idea of focusing on salient parts of the input by taking a weighted average of them, has proven to be the key factor in a wide class of #neuralnet models. #MultiheadAttention in particular has proven to be a key reason for the success of state-of-the-art natural language processing (#NLP) models such as #BERT and […]
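
To make the "weighted average" idea concrete, here is a minimal sketch of a single attention head in plain Python. It assumes scaled dot-product scoring, which is one common choice and is not stated in the excerpt; the names `softmax` and `attend` and the toy vectors are hypothetical, chosen only for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Single-head attention (assumed scaled dot-product form).

    Each key is scored against the query, the scores become weights,
    and the output is the weighted average of the value vectors.
    """
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]
    weights = softmax(scores)
    dim = len(values[0])
    output = [
        sum(w * v[i] for w, v in zip(weights, values))
        for i in range(dim)
    ]
    return weights, output


# Toy inputs (hypothetical): three positions, two-dimensional vectors.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]

weights, output = attend(query, keys, values)
```

The key most similar to the query receives the largest weight, so its value dominates the average. Multi-head attention runs several such heads in parallel on differently projected inputs and combines their outputs; the learned projections are omitted from this sketch.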